If you need to use put in other process, then you need to initialize values in QQueue with init. Process ( target = _process, args = ( qq ,)) p. get ()) if _name_ = "_main_" : qq = QQueue () p = multiprocessing. Operation when iterable is consumed but this not close queue, you need call to close() or to end() in this case): def _process ( qq ): print ( qq.

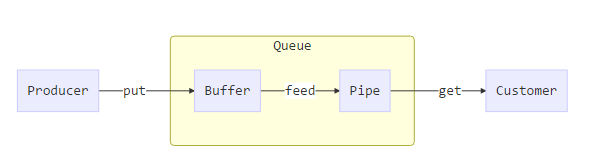

You can put all values in one iterable or several iterables with put_iterable method ( put_iterable perform remain Queue, you can call put_remain, then you need to call manually to close (or end, this performs close operation Note: you need to call end method to perform remain operation and close queue. end () # When end put values call to end() to mark you will not put more values and close QQueueĬomplete example (it needs import multiprocessing): def _process ( qq ): print ( qq. put ( "value" ) # Put all the values you need qq. Pseudocode without process: qq = QQueue () # > qq. Then subprocesses have elements very quickly. While Producer produce and put lists of elements in queue, subprocesses consume those lists and iterate every element, In other words, Multiprocess queue is pretty slow putting and getting individual data, then QuickQueue wrap severalĭata in one list, this list is one single data that is enqueue in the queue than is more quickly than put one To transfer data between python processes.īut if you put or get one list with elements work similar as put or get one single element this list is getting asįast as usually but this has too many elements for process in the subprocess and this action is very quickly. The motivation to create this class is due to multiprocessing.queue is too slow putting and getting elements Last release version of the project to install in: pip install quick-queue Information about multiprocessing.queue in Note that the function g does strictly more jobs than f, but its answers are much shorter.This is an implementation of Quick Multiprocessing Queue for Python and work similar to multiprocessing.queue (more def f(n):įrom multiprocessing import Process, Queue

Following the suggestion of I attached my code with an example. I would like to know if there is any hidden parameter to prevent the Python MultiProcessing module from producing long outputs in Sagemath. For the same computation, once I add a line to manually set the output to be something short, or extract a small part of the original output, then the computation no longer gets stck in the end, and that small part agrees with the original answer. What puzzles me is that the problem depends on the length of output, not the time of computation. However, the output still does not appear. I monitored the CPU usage, it peaks at first and then returns to zero, which means that the computation is complete. SageMath gets stuck after the computation if the output is long. When using MultiProcessing module for the same problem with the same input, everything works fine for short output, but there is a problem when the output is long. When using paralell decoration, everything works fine. I am using SageMath 9.0 and I tried to do paralle computation in two waysġ) parallel decoration built in SageMath
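The attached code survives only as a fragment, so the following is a guess rather than a diagnosis. The Python `multiprocessing` programming guidelines ("Joining processes that use queues") warn that a process that has put items on a queue waits, before terminating, until its feeder thread has written all buffered items to the underlying pipe, so joining it before those items are removed from the queue can deadlock. That would fit the symptoms described: the CPU goes idle, short results come through, and long ones hang. Below is a minimal sketch of the safe ordering, assuming results are returned through a `multiprocessing.Queue`; `long_result` and `run` are hypothetical names, not taken from the question.

```python
from multiprocessing import Process, Queue


def long_result(n, queue):
    # Hypothetical worker standing in for a computation with a long output.
    queue.put(list(range(n)))


def run(n):
    queue = Queue()
    p = Process(target=long_result, args=(n, queue))
    p.start()
    # Read the result *before* join(). The child cannot exit until its
    # queue feeder thread has pushed all buffered data into the pipe, and
    # a large payload only drains once the parent reads it. Calling
    # p.join() first can therefore block forever for long outputs, while
    # short outputs fit in the pipe buffer and appear to work.
    result = queue.get()
    p.join()
    return result


if __name__ == "__main__":
    print(len(run(1_000_000)))  # 1000000
```

Swapping the `queue.get()` and `p.join()` lines should reproduce the hang for large `n` on typical systems, while small `n` still works, which matches the "depends on output length, not computation time" observation.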

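QuickQueue's motivation (send many elements per queue transfer instead of one) can be illustrated with the standard library alone. This is a minimal sketch of the batching idea, not the quick-queue implementation or its API: `put_batched`, `iter_batched`, `_consumer`, the `None` end marker and the batch size of 1000 are hypothetical choices for this example.

```python
from multiprocessing import Process, Queue

END = None  # hypothetical end marker: "no more batches will follow"


def put_batched(queue, items, batch_size=1000):
    """Send items as lists of up to batch_size elements, then END."""
    batch = []
    for item in items:
        batch.append(item)
        if len(batch) == batch_size:
            queue.put(batch)  # one queue transfer carries many elements
            batch = []
    if batch:
        queue.put(batch)  # flush the last, partially filled list
    queue.put(END)


def iter_batched(queue):
    """Yield individual elements from the lists sent by put_batched."""
    while True:
        batch = queue.get()
        if batch is END:
            return
        yield from batch


def _consumer(queue, results):
    # Iterate over every received element and report their sum back.
    results.put(sum(iter_batched(queue)))


if __name__ == "__main__":
    data_queue, result_queue = Queue(), Queue()
    p = Process(target=_consumer, args=(data_queue, result_queue))
    p.start()
    put_batched(data_queue, range(100_000), batch_size=1000)
    total = result_queue.get()  # read the result before join()
    p.join()
    print(total)  # 4999950000
```

Here 100,000 values cross the process boundary in 100 list transfers plus one end marker instead of 100,000 individual transfers. Flushing the last partial list and then sending the end marker roughly mirrors what the README says `end()` does.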